the algorithm isn't telling you the truth. it's making you fragile.

there's a mechanic in modern gaming, especially in ranked online games like marvel rivals, overwatch, and call of duty, called engagement-optimized matchmaking (eomm). on the surface, it sounds like a good thing – the system tries to find matches that keep you playing, keep you engaged. seems logical. but look closer.

it's not optimizing for pure, objective skill matching. it's optimizing for metrics: logins, daily active users, monthly active users, time spent in game, and crucially, money spent on skins and battle passes. eomm will strategically place you in matches it predicts you're more likely to win, or at least feel good about, especially after a loss streak. it gives you little hits of engineered progress, engineered validation. it tells you, implicitly or explicitly with inflated ranks, that you're "good," you're "improving," you're "special" because you're still here, still playing.
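to make the difference concrete, here's a toy sketch in python. it is not any studio's actual matchmaker: the `Player` fields, the elo-style win estimate, and the `boost_per_loss` knob are all assumptions invented for illustration. one picker finds the fairest match available; the other aims at a predicted win chance that climbs with your loss streak.

```python
from dataclasses import dataclass


@dataclass
class Player:
    name: str
    skill: float          # hypothetical hidden rating on an elo-like scale
    loss_streak: int = 0  # consecutive recent losses


def win_probability(player_skill: float, opponent_skill: float) -> float:
    """elo-style logistic estimate of the chance the player wins."""
    return 1.0 / (1.0 + 10 ** ((opponent_skill - player_skill) / 400.0))


def pick_skill_based(player: Player, pool: list[Player]) -> Player:
    """pick the opponent closest in skill: the fairest available match."""
    return min(pool, key=lambda opp: abs(opp.skill - player.skill))


def pick_engagement_biased(
    player: Player, pool: list[Player], boost_per_loss: float = 0.1
) -> Player:
    """pick the opponent whose predicted outcome is closest to a target
    win chance that rises with the loss streak (capped at 0.9).
    boost_per_loss is an illustrative assumption, not a known value."""
    target = min(0.5 + boost_per_loss * player.loss_streak, 0.9)
    return min(
        pool,
        key=lambda opp: abs(win_probability(player.skill, opp.skill) - target),
    )


# a player on a three-game loss streak, and four possible opponents
you = Player("you", skill=1500, loss_streak=3)
pool = [
    Player("even", 1500),
    Player("weaker", 1300),
    Player("much_weaker", 1100),
    Player("stronger", 1700),
]

fair = pick_skill_based(you, pool)          # the evenly matched opponent
comfy = pick_engagement_biased(you, pool)   # a weaker one you'll likely beat
```

in this sketch the fair picker hands you the even opponent, while the engagement-biased picker hands you the weaker one, because a predicted win is what it was tuned to deliver. the rank you see afterward reflects the tuning as much as the play.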

but this is the lie. this is the trap. it's not telling you the truth about your actual skill level relative to the player base. it's rewarding you for showing up, for putting in time, for staying hooked. the system isn't a neutral arbiter of merit; it's an engagement engine disguised as a ranking system. it trains you to expect progress simply for participating, to feel good about your rank because the system wants you to keep chasing the next dopamine hit, the next engineered win.

we're seeing this pattern repeat everywhere tech optimizes for our attention and comfort. look at the current generation of ai chat apps. they are overwhelmingly friendly, agreeable, even overly complimentary. they are designed to reaffirm you, to avoid friction, to tell you what you want to hear or what keeps you engaged with them. ask a clumsy question, get a helpful, non-judgmental answer. present a half-baked idea, get encouraging feedback. it's like eomm for your thoughts – optimized for your comfort, not necessarily for challenging you or presenting uncomfortable counter-truths that might make you disengage.

this isn't just harmless digital comfort. this is engineered fragility.

the deep, brutal secret is that these systems are training us away from reality. they are habituating us to a world where showing up guarantees progress, where systems prioritize our feelings over objective truth, where difficult feedback is softened into affirmation, where the path is paved smooth to keep us moving along. they are conditioning us to expect engineered validation.

and the consequence? you get hard stuck.

not just hard stuck at a diamond rank in overwatch because you hit a genuine skill ceiling you haven't earned the tools to break through. you get hard stuck in life. when reality doesn't have an eomm algorithm. when your boss gives you unvarnished criticism. when a difficult problem requires genuine struggle and failure, not an engineered win streak. when building something real demands grinding through unrewarding boilerplate or facing data that contradicts your assumptions.

these are moments that require resilience, the ability to process negative feedback, the capacity to find motivation when the system isn't validating you, the judgment to discern uncomfortable truth from comforting lies. these are muscles developed by grappling with un-engineered friction and objective reality.

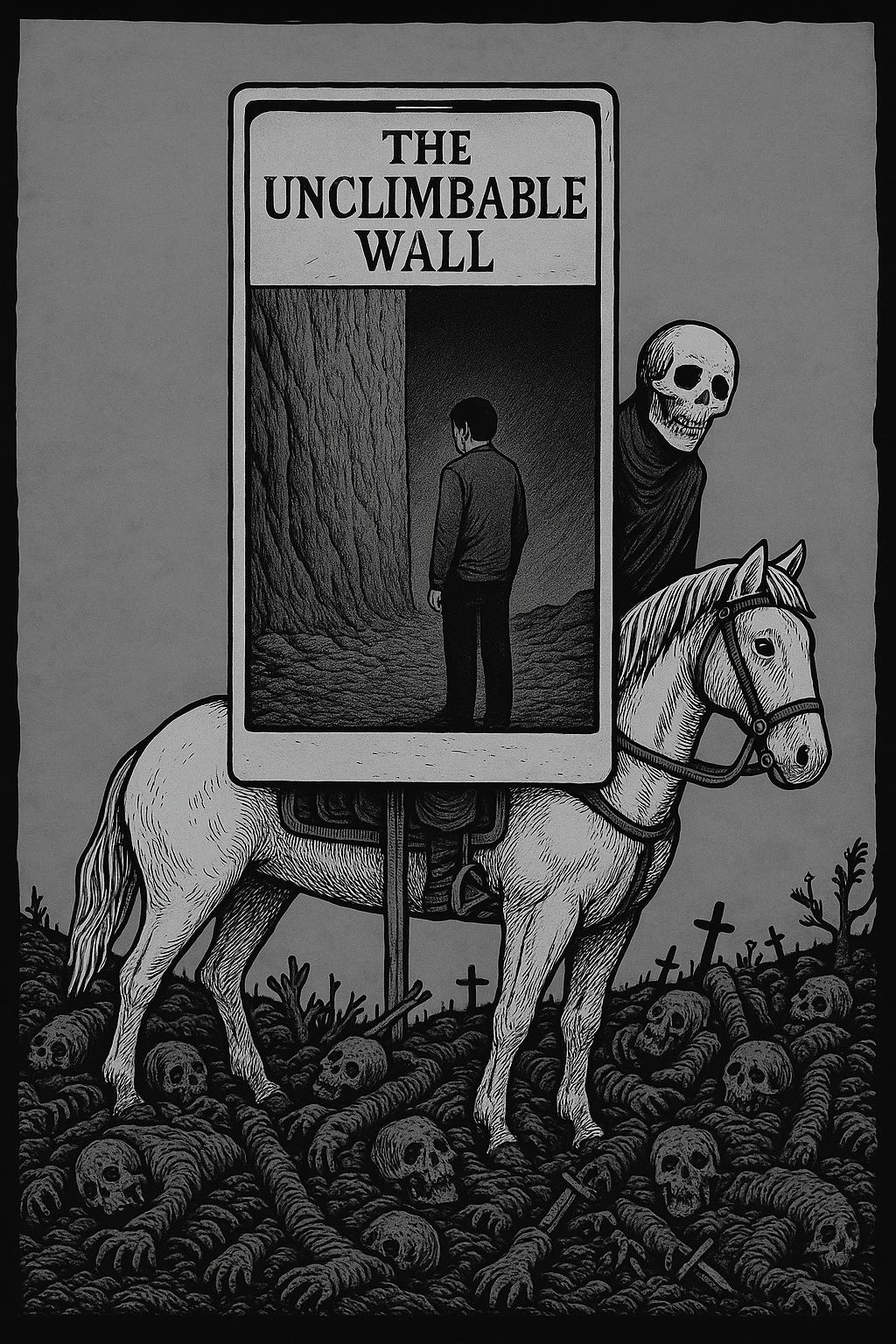

but if you've been trained in systems that optimize away that friction, that soften that feedback, that obscure that truth behind layers of validation, you haven't built those muscles. you hit the un-optimized wall and you don't know how to climb. you are hard stuck because your training environment lied to you about the nature of progress and the value of discomfort.

the risk is that we are trading real capability – the capacity to navigate messy, uncurated reality, to endure failure, to earn progress through genuine effort – for the comfortable illusion of continuous, easy advancement and constant validation provided by optimized systems. the systems benefit from our engagement and dependence. we pay the price in our own increasing fragility when faced with anything that hasn't been engineered for our comfort.

building better judgment on purpose requires facing reality, diagnosing flaws (in code, in systems, in yourself), and making decisions based on truth, however uncomfortable. engineered agreement is the inverse. it's anti-judgment. it tells you the fault line isn't there, your rank is fine, your idea is great. it removes the need for objective judgment, and eventually the capacity for it.

this isn't just about games or chatbots. it's about the fundamental infrastructure of our digital lives training us out of the very skills needed to thrive when we log off and face a world that doesn't care about our daily active status. the hardest truth is that the comfortable lie is making us soft, and we are buying it willingly.